Assessing the user experience (UX) of online museum collections: Perspectives from design and museum professionals

Craig MacDonald, Pratt Institute, USA

Abstract

Studies show that online museum collections are among the least popular features of a museum website, which many museums attribute to a lack of interest. While it’s certainly possible that a large segment of the population is simply uninterested in viewing museum objects through a computer screen, it is also possible that a large number of people want to find and view museum objects digitally but have been discouraged from doing so due to the poor user experience (UX) of existing online-collection interfaces. This paper describes the creation and validation of a UX assessment rubric for online museum collections. Consisting of ten factors, the rubric was developed iteratively through in-depth examinations of several existing museum-collection interfaces. To validate the rubric and test its reliability and utility, an experiment was conducted in which two UX professionals and two museum professionals were asked to apply the rubric to three online museum collections and then provide their feedback on the rubric and its use as an assessment tool. This paper presents the results of this validation study, as well as museum-specific results derived from applying the rubric. The paper concludes with a discussion of how the rubric may be used to improve the UX of museum-collection interfaces and future research directions aimed at strengthening and refining the rubric for use by museum professionals.

Keywords: virtual museum, digital collections, interface design, evaluation, UX, usability

1. Introduction

New technologies have greatly enhanced the in-person experience for museum visitors (e.g., interactive displays; van Dijk, Lingnau, & Kockelkorn, 2012), but the virtual museum experience—delivered via the museum website—is still a critical concern for museum professionals. A typical feature of museum websites is the online collection, which was initially conceived as a way to provide subject-matter experts with convenient access to museum holdings without needing to be physically present (Rayward & Twidale, 1999; Jones, 2007). However, studies have shown that online museum collections are among the least popular features of a museum website (Haynes & Zambonini, 2007; Fantoni & Stein, 2012). Many museum experts attribute this low popularity to a lack of interest, leading museums to instead focus on using the website to facilitate in-person visits (with some notable exceptions; e.g., Gorgels, 2013). While it’s certainly possible that a large segment of the population is simply uninterested in viewing museum objects through a computer screen, there is an alternate explanation that should also be considered: a large number of people want to find and view museum objects digitally but have been deterred from doing so due to the poor user experience (UX) of existing online-collection interfaces.

Usability has long been a goal of online museums, but modern online interfaces must go beyond simply providing access to digital artworks and focus on delivering positive emotional outcomes (Hassenzahl & Tractinsky, 2006). To that end, this paper details the creation of a rubric for assessing the UX of online museum collections, starting with a definition of rubrics and a discussion of the iterative development of the rubric. Next, the paper discusses the results of a study examining the rubric’s reliability, validity, and utility and outlines the next steps to adapt the rubric for use by museum professionals.

2. Literature review

To frame the remainder of the paper, the literature review covers two broad areas: assessment rubrics and user experience evaluation.

2.1 Assessment rubrics

Because the term rubric has various meanings, for our purposes we will consider a rubric to mean simply the “criteria for assessing… complicated things” (Arter & McTighe, 2001). From an educational perspective, rubrics are scoring tools that list the criteria by which something is assessed and that articulate gradations of quality for each criterion (Goodrich, 1997; Hafner & Hafner, 2003). In its most basic form, a rubric contains three primary components, set out on a grid: a rating scale, a list of dimensions, and descriptions of each level of performance for each dimension (Stevens & Levi, 2013). This basic structure is presented in table 1.

| | Scale Level 1 | Scale Level 2 | Scale Level 3 |
|---|---|---|---|
| Dimension 1 | description | description | description |
| Dimension 2 | description | description | description |
| Dimension 3 | description | description | description |

Table 1: basic rubric grid format (adapted from Stevens & Levi, 2013)

Experts typically split rubrics into two groups: holistic rubrics and analytic rubrics. Holistic rubrics, as the name implies, look at the product or performance as a whole and therefore contain just one dimension (e.g., “overall performance” or “overall quality”). Analytic rubrics, like the example in table 1, split a product or performance into its component parts, allowing for feedback on multiple dimensions (Quinlan, 2012).
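As an illustration only, the grid structure in table 1 and the holistic/analytic distinction can be expressed as a small data structure. The sketch below is not part of the cited literature; the class and method names (`Rubric`, `Dimension`, `add_dimension`, `descriptor`, `is_holistic`) are hypothetical and chosen purely for readability.

```python
from dataclasses import dataclass, field


@dataclass
class Dimension:
    """One row of the rubric grid."""
    name: str
    descriptors: list  # one description per scale level, lowest level first


@dataclass
class Rubric:
    """A rating scale plus the dimensions assessed against it."""
    scale: list  # labels for the rating scale, lowest level first
    dimensions: list = field(default_factory=list)

    def add_dimension(self, name, descriptors):
        # Every dimension needs exactly one descriptor per scale level.
        if len(descriptors) != len(self.scale):
            raise ValueError("expected one descriptor per scale level")
        self.dimensions.append(Dimension(name, list(descriptors)))

    def descriptor(self, name, level):
        """Return the description for a dimension at a 1-based scale level."""
        for dim in self.dimensions:
            if dim.name == name:
                return dim.descriptors[level - 1]
        raise KeyError(name)

    def is_holistic(self):
        # A holistic rubric has a single dimension; an analytic one has several.
        return len(self.dimensions) == 1


# Placeholder content mirroring table 1 (three scale levels, three dimensions).
rubric = Rubric(scale=["Scale Level 1", "Scale Level 2", "Scale Level 3"])
for i in (1, 2, 3):
    rubric.add_dimension(f"Dimension {i}", ["description"] * 3)
```

Under this representation, the rubric in table 1 is analytic because it holds three dimensions, whereas a rubric with a single “overall quality” dimension would be holistic.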

Rubrics are not without controversy (Hafner & Hafner, 2003), but there is general agreement that rubrics offer four advantages over other assessment methods:

- Efficiency: Rubrics streamline the assessment process and reduce the need for explaining why a particular score was given (Goodrich, 1997; Stevens & Levi, 2013).

- Transparency: Rubrics clearly define “quality” in objective and observable ways, which promotes transparency (Jonsson & Svingby, 2007; Quinlan, 2012).

- Reflectiveness: Rubrics promote reflection about how and why improvements can be made, rather than prescribe specific fixes (O’Reilly & Cyr, 2006; Jonsson & Svingby, 2007; Stevens & Levi, 2013).

- Ease of Use: Applying a rubric is nearly as simple as completing a form, and a completed rubric is a ready-made tool for communicating assessment results (Goodrich, 1997; Hafner & Hafner, 2003).

It is important to note that rubrics are not inherently “good” or “bad,” but instead should be seen as a tool that can provide a great deal of value when used appropriately (Turley & Gallagher, 2008).

2.2 User-experience evaluation

Usability testing is the most common method for evaluating user interfaces, but experts have acknowledged that it may not be the best approach for capturing the complexity and ambiguity of UX (Bargas-Avila & Hornbæk, 2011; MacDonald & Atwood, 2013). If we accept the general view of UX—that it is “dynamic, context-dependent and subjective” (Law et al., 2009)—then we must recognize the possibility that “good” UX may be defined differently in different contexts. For example, the UX of a mobile banking application may be defined entirely by speed and security, two features that are likely not as critical for online museums. An assessment rubric focused explicitly on UX has never been created, but the flexibility and ease of use of rubrics make them well suited for assessing such a complicated concept.

3. Creating a user-experience rubric for online museum collections

Creating a rubric is neither trivial nor straightforward, but there is a general process that is recommended. To demonstrate this process, each step is described below in the context of developing a UX rubric for online museum collections.

3.1 Step 1: Identify purpose/goals

The first step is to identify the purpose and/or goals of the assessment. This decision will drive the selection of assessment criteria (step 3). In this case, the purpose of the rubric is to assess the UX quality of an online museum collection.

3.2 Step 2: Choose rubric type

The second step is to decide whether the rubric will be analytic or holistic. To provide a deeper level of feedback on various dimensions of online museum UX, an analytic rubric was selected.

3.3 Step 3: Identify the dimensions

The third step is to identify the dimensions of the rubric, which requires breaking down the product being evaluated into four to eight components (or more, if necessary) that are observable, important, and precise (O’Reilly & Cyr, 2006). There is no prescriptive method for choosing rubric dimensions, so rubric creators are encouraged to adopt a method that best fits their particular context.

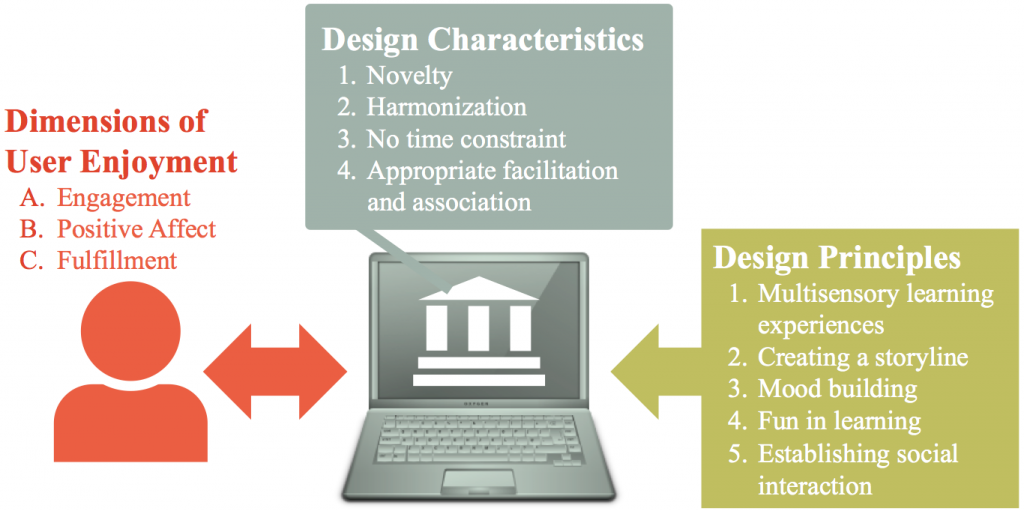

In an effort to consider as many viewpoints as possible, selecting dimensions for the UX rubric for online museum collections was iterative and utilized several different methods. The process began with a search of the academic and professional research literature to determine if there were any existing principles for designing online museum collections. This search did not yield any guidelines explicitly tied to UX, but it did uncover research by Lin, Fernandez, and Gregor (2012) in which they identified four design characteristics and five design principles of online museums that were associated with higher levels of user enjoyment (illustrated in figure 1).

Next, the author and a graduate research assistant collaboratively reviewed thirty-nine online museum collections with respect to these dimensions, discussing whether and how each collection utilized each one and providing a numerical rating (from 1 to 7, with 7 being the strongest) as an informal assessment of how well each collection demonstrated each dimension. Early in this process, one museum rose well above the others by excelling across nearly all nine dimensions: the Rijksmuseum in Amsterdam (https://www.rijksmuseum.nl/en). However, when discussing more explicitly what made the Rijksmuseum’s experience so positive, we discovered several limitations to Lin et al.’s framework and began developing a parallel set of dimensions that more closely matched our experience and were more observable and explicit. This process required numerous discussions and reflections about each dimension, which eventually helped to further improve the vocabulary, tighten the concepts, and determine more effectively the ability of each dimension to accurately measure an important aspect of UX. This new set of principles drew heavily from Lin et al.’s original research but also clarified several criteria (e.g., harmonization became balance of text and visual content) and added new concepts not covered previously (e.g., useful and appropriate metadata to aid discovery). Once the revised set of dimensions was determined, the dimensions were grouped into three categories inspired by Norman’s (2004) model of emotional design: visceral (which define whether a user will want to use the collection), behavioral (which define whether a user can use the collection), and reflective (which define whether a user will want to come back to use the collection). The final rubric dimensions, and their associated descriptions, are presented in table 2 at the end of this section.

3.4 Step 4: Choose a rating scale

The fourth step is to determine the number of rating-scale points, with typical rubrics using between two- and five-point rating scales (Huba & Freed, 2000). To provide moderate discriminatory power and encourage a neutral, non-judgmental language of assessment, this rubric uses a four-point scale with the labels Incomplete, Beginning, Developing, and Emerged.

3.5 Step 5: Write descriptors for each rating point

Finally, the fifth step is to write clear and well-defined gradations of quality for each rubric dimension. Goodrich (1997) notes the importance of using clear language when writing descriptors, not being unnecessarily negative, and making the quality gradations distinct and purposeful. For instance, a four-point rating scale should describe the ratings of quality as “Yes,” “Yes, but,” “No, but,” and “No.”

3.6 Final rubric

After creating an initial draft of the rubric, the author conducted a series of pilot studies with two graduate research assistants in which they independently applied the rubric to various museum collections and then came together to systematically discuss their ratings and reflect on the relevance, accuracy, and clarity of each descriptor. These discussions resulted in more revisions to the language used throughout the rubric as well as the addition of a tenth dimension (system reliability and performance) that was not captured previously. The final version of the rubric is presented in table 2.

| | Incomplete (1) | Beginning (2) | Developing (3) | Emerged (4) |
|---|---|---|---|---|
| Visceral: Factors influencing immediate impact/impressions (e.g., will people want to use the collection?) | | | | |
| Strength of Visual Content | Artwork is a peripheral component of the collection, with text the dominant visual element. Images, when present, are too small and low quality. Text is a major distraction from the visual content. | Artwork is not emphasized throughout the collection, and images are rarely the dominant visual element. Some images are too small and/or low quality. At times, text is too dense and distracts from the visual content. | Artwork is featured throughout the collection, but images are not always the dominant visual element. Most images are large and high quality. Text is used purposefully, but some is superfluous. | Artwork is presented as the primary focus of the collection, with images as the dominant visual element. All images are large and high quality. Text is used purposefully but sparingly to enhance the visual content. |
| Visual Aesthetics | Color, graphics, typography, and other non-interactive interface elements are used inharmoniously and inconsistently. Elicits negative affective reactions. | Color, graphics, typography, and other non-interactive interface elements are moderately harmonious or consistent. Elicits neutral or moderately positive affective reactions. | Color, graphics, typography, and other non-interactive interface elements are mostly harmonious with only minor inconsistencies. Elicits affective reactions that are generally positive. | Color, graphics, typography, and other non-interactive interface elements are harmonious and used consistently. Elicits affective reactions that are universally positive. |

| | Incomplete (1) | Beginning (2) | Developing (3) | Emerged (4) |
|---|---|---|---|---|
| Behavioral: Factors influencing immediate interaction/usage (e.g., will people be able to use the collection?) | | | | |
| System Reliability and Performance | The interface has several serious technical errors that prevent users from completing important tasks. There may be significant delays when loading many pages and/or interface elements. | The interface has some major technical errors that detract from the overall experience, but still allow users to complete tasks. There may be some delays when loading some pages and/or interface elements. | The interface has some minor technical errors that don’t detract from the overall experience. There may be a slight delay when loading some pages and/or interface elements. | The interface is fully functional and completely free of technical errors. The pages consistently load quickly, and all aspects of the interface respond immediately to user actions. |
| Usefulness of Metadata | Metadata structure is both too broad and too deep, which prevents users from finding and learning about artworks. Excludes some standard metadata facets and provides limited options to browse, search, or filter artworks. | Metadata structure is either far too broad or far too deep, which limits users’ ability to find and/or learn about artworks. Includes only standard metadata facets and traditional ways to browse, search, and filter artworks. | Metadata structure aids users in finding and learning about artworks. Includes all standard metadata facet(s) and some non-standard facets that offer a different way to browse, search, or filter artworks. | Metadata structure is purposefully designed to enhance users’ ability to find and learn about artworks. Includes novel metadata facets that offer innovative ways to browse, search, and filter artworks. |
| Interface Usability | Interface is not intuitive and requires substantial effort to learn. Several major interface elements are hidden and/or unnecessarily complex, which causes major usability issues. | Interface is somewhat intuitive but a distinct learning curve is apparent. Some interface elements are in unexpected places or are overly complex, causing minor usability issues. | Interface is mostly intuitive but has a slight learning curve. Interface elements require some memorization and/or trial and error but are generally easy to use and locate. | Interface is intuitive and accessible. Interface elements are easy to locate and easy to use, creating a seamless and immersive interaction between the user and the collection. |
| Support for Casual and Expert Users | Primarily provides advanced content and functionality for expert users, but implementation is poor. Use of advanced features is required but poses a substantial obstacle for both expert and casual users. Both advanced research and casual browsing are difficult or impossible. | Strong appeal to expert users through advanced content and functionality. Many advanced features are included by default, which supports expert users but may confuse casual users. Advanced research is emphasized, but casual browsing is difficult or impossible. | Strong appeal to casual users through basic content and functionality. Some advanced features are included by default, creating a minor obstacle for casual users but effective tools for expert users. Both advanced research and casual browsing are encouraged, with a slight learning curve for the latter. | Primarily provides basic content and functionality for casual users. Advanced features are visible but unobtrusive, which effectively supports expert users and encourages learnability for casual users. Allows for a seamless transition between casual browsing and advanced research. |

| | Incomplete (1) | Beginning (2) | Developing (3) | Emerged (4) |
|---|---|---|---|---|
| Reflective: Factors influencing long-term interaction/usage (e.g., will people want to come back to use the collection?) | | | | |
| Uniqueness of Virtual Experience | Virtual museum experience is limited compared to the physical museum experience. Finding and viewing virtual artworks has distinct disadvantages or limitations that would not be present in the physical museum. | Virtual museum experience is directly analogous to the physical museum experience. Finding and viewing virtual artworks offers nothing new or unique compared to being in the physical museum. | Virtual museum experience is different but still borrows from the physical museum experience. Finding and viewing virtual artworks offers something new and/or different that would be uncommon or unlikely in the physical museum. | Virtual museum experience is entirely different from the physical museum experience. Finding and viewing virtual artworks allows for new and insightful perspectives that would not be possible in the physical museum. |
| Openness | Users are not given any control over the content. Options for downloading, printing, and/or saving high-quality images are not provided. | Users are given minimal control over the content. Options for downloading, printing, and/or saving high-quality images are limited. | Users are given a moderate degree of control over the content. Options for downloading, printing, and/or saving high-quality images are present but may not be universal. | Users are given complete control over the content, with clearly marked options to download, print, and/or save high-quality images. |
| Integration of Social Features | Does not allow users to participate in a virtual community, with no involvement or contribution from the museum. Social tools are not integrated into the collection. Provides no options for sharing and communicating with others. | Allows limited participation in a virtual community, which includes minimal or insubstantial contributions from the museum. Social tools are barely visible and/or poorly integrated into the collection. Provides few options for sharing and communicating. | Allows for varying levels of participation within a virtual community, of which the museum is a passive participant. Social tools are present but not prominent. Provides some options for sharing and communicating with others. | Encourages varying levels of participation within a virtual community, of which the museum is an active participant. Social tools are prominently integrated into the collection. Provides multiple options for sharing and communicating with others. |
| Personalization of Experiences | Does not allow users to create personalized experiences. Options for customization, gaming, or personalization are non-existent. Users are entirely passive consumers with no meaningful control over their virtual museum experience. | Allows users to create semi-personalized experiences. Customization, gaming, or personalization features are limited and/or hidden. Users are mostly passive consumers with little control over their virtual museum experience. | Allows users to create personalized experiences with some limitations. Provides some customization, gaming, or other personalization features. Encourages users to actively influence their virtual museum experience. | Allows users to craft dynamic personal experiences with few, if any, limitations. Integrates robust customization, gaming, and/or other innovative personalization features. Inspires users to be active co-creators of their virtual museum experience. |

Table 2: The final UX rubric for online museum collections

4. Measuring rubric quality

Following the above process should ensure that a rubric is technically sound, but it’s still necessary to demonstrate the rubric’s quality. This process is also not an exact science, but it is generally recommended to examine three aspects of the rubric: its reliability, validity, and utility (O’Reilly & Cyr, 2006; Quinlan, 2012).

4.1 Study methodology

To test the quality of the UX rubric for online museum collections (presented in table 2), a study was conducted in which four experts—two museum professionals and two user experience professionals, each with more than five years of experience in their field—were asked to apply the rubric to three online collections offered by museums from various geographic locations (one in the United Kingdom, two in the United States). The study itself took approximately ninety minutes to complete and consisted of two parts. In part 1, participants received a brief overview of rubrics and then used the rubric to assess the three museum collections (presented in random order). In part 2, participants completed a brief survey and an informal interview about their perceptions of the rubric and their experience using it as an assessment tool. Three participants completed the study face to face (due to scheduling constraints, the fourth participant completed the study remotely over two days). All sessions were held in August and September 2014.

4.2 Study results

For convenience, the study results will be presented separately for each of the three aspects of rubric quality: reliability, validity, and utility.

4.2.1 Reliability

The reliability of a rubric describes the extent to which using the rubric provides consistent ratings of quality (O’Reilly & Cyr, 2006). For this rubric to be considered reliable, it will need to show high inter-rater reliability; that is, different raters should provide the same (or similar) ratings when applying the rubric to the same interface. One common approach for showing inter-rater reliability is using consensus agreements, or the percentage of raters with exact or adjacent agreement (Stellmack et al., 2009).

In this study, participants rated three museum collections on ten different dimensions, creating thirty potential opportunities for agreement. Following the procedure outlined in Stellmack et al. (2009), two estimates of agreement were calculated: conservative agreement was defined as all raters providing the exact same rating on a given dimension, and liberal agreement was defined as all raters being within one rating point from each other on a given dimension. Of the thirty opportunities for agreement, four (13.3 percent) met the criteria for conservative agreement while nineteen (63.3 percent) met the criteria for liberal agreement. While these results are statistically significant (p < 0.001 for both), both percentages are below what is generally viewed as acceptable (typically at or above 30 percent for conservative agreement and at or above 80 percent for liberal agreement). In other words, the levels of agreement are unlikely due to chance alone (i.e., using the rubric is better than blind guessing), but there is still plenty of room for improvement.
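To make the two agreement definitions concrete, the following minimal sketch counts conservative and liberal agreement over a set of rating opportunities and estimates how often each would occur by chance. The function names and the sample ratings are hypothetical, and the chance baseline assumes each rater picks any of the four scale points uniformly at random; the null model behind the significance test reported above is not specified, so this should not be read as a reproduction of that test.

```python
from itertools import product


def consensus_agreement(ratings):
    """Count agreement over a list of rating opportunities.

    Each element of `ratings` holds every rater's score for one
    (collection, dimension) pair.
    """
    # Conservative: all raters gave exactly the same score.
    conservative = sum(1 for r in ratings if max(r) == min(r))
    # Liberal: all raters are within one scale point of each other.
    liberal = sum(1 for r in ratings if max(r) - min(r) <= 1)
    return conservative, liberal, len(ratings)


def chance_agreement(n_raters, n_points=4):
    """Probability of each kind of agreement under uniform random rating.

    Assumption for illustration: raters choose independently and uniformly.
    """
    combos = list(product(range(1, n_points + 1), repeat=n_raters))
    exact = sum(1 for c in combos if max(c) == min(c)) / len(combos)
    adjacent = sum(1 for c in combos if max(c) - min(c) <= 1) / len(combos)
    return exact, adjacent


# Hypothetical ratings from four raters on three opportunities.
sample = [(3, 3, 3, 3), (2, 3, 3, 2), (1, 3, 4, 2)]
conservative, liberal, total = consensus_agreement(sample)
exact_4, adjacent_4 = chance_agreement(4)
exact_2, adjacent_2 = chance_agreement(2)
```

Under the uniform assumption, the chance baselines are much lower for four raters than for two, which is one reason agreement among all four participants is harder to reach than agreement within a pair.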

However, the data tell a much different story when looking at the UX experts and museum experts separately, as the agreement within both groups met or exceeded the acceptable thresholds (see table 3). Higher levels of agreement were expected (getting two people to agree is considerably easier than getting four people to agree), but the size of the improvement is notable.

In summary, the study results show this rubric can be considered a reliable instrument, but only when used by evaluators who share a common disciplinary background and professional focus. To better understand why, it may be helpful to examine the results related to the rubric’s validity.

| Participant Type | Conservative Agreement | Liberal Agreement |
|---|---|---|
| User Experience (2) | 9 / 30 (30.0%) | 24 / 30 (80.0%) |
| Museum (2) | 14 / 30 (46.7%) | 28 / 30 (93.3%) |
| Combined (4) | 4 / 30 (13.3%) | 19 / 30 (63.3%) |

Table 3: consensus agreement by participant type

4.2.2 Validity

The two most common types of rubric validity are content validity, or the extent to which the rubric measures things that actually matter, and construct validity, or whether the rubric actually measures the construct it is supposed to measure (Roblyer & Wiencke, 2003; Jonsson & Svingby, 2007).

The recommended approach for demonstrating content validity is to solicit feedback from content-area experts during the rubric creation process. However, due to the novelty of this particular type of rubric and the well-known ambiguity of UX as a concept, content validity was instead measured by asking study participants to rate the perceived relevance of each rubric dimension on a scale of 1 to 7, where 1 is least relevant and 7 is most relevant. As shown in figure 2, the visceral and behavioral dimensions of the rubric were viewed as highly relevant across the board, but perceptions of the reflective dimensions were mixed, showing vast differences of opinion between the two groups.

The disciplinary background and professional focus of the participants seemed to be a driving force behind these results, with differences likely stemming from how UX and museum professionals view the challenge of engaging with online visitors (as one of the UX professionals noted, the rubric “may be confusing what museums think are important with what users think is important.”). Specifically:

- Uniqueness of Virtual Experience. While the UX and museum experts rated this dimension differently, the opinions they expressed in the post-study interview were actually quite similar: that the concept was seen as relevant but there were problems with the term “uniqueness” (as one UX expert noted, “Uniqueness is not a virtue itself.”). Instead, participants felt this concept was better expressed as “Enhancement of Physical Museum Experience” in that “the virtual medium should mirror the physical museum” and support the museum’s overall vision rather than be totally unique from it.

- Openness. Openness was the only dimension that UX experts rated higher than the museum experts, and it’s easy to see why: UX professionals have little or no working knowledge of dealing with the issues associated with publishing digital versions of copyrighted materials, and thus were free to imagine what an ideal collection should look like. Not surprisingly, the museum professionals were more realistic about the organizational, practical, and legal constraints of providing a truly “open” collection, leading to lower ratings of relevance for this dimension.

- Integration of Social Features and Personalization of Experiences. For museum experts, these two concepts were seen as necessary for driving engagement through repeat visits, while UX experts viewed them as “things museums care about but aren’t an actual problem that users worry about” (according to one UX expert). Importantly, neither of the UX experts had ever used any social or personalization features provided by a museum website (one remarked that they “had never seen personalization done well” in a museum context), and each had difficulty envisioning scenarios in which they would do so.

Despite these differences of opinion, none of the study participants could think of any concepts or interface elements that were not already captured by the rubric, which suggests moderate to strong levels of content validity for the rubric as a whole. In other words, the issues raised about the reflective dimensions may not be indicators of low validity but instead of the widespread uncertainty about the goals and purpose of online museum collections and the most effective ways to engage with online visitors. Additional research in these areas can shed light on these issues and, ultimately, strengthen the content validity of the reflective dimensions of the rubric.

The second aspect of validity, construct validity, is typically measured by showing a high correlation between the rubric scores and another accepted measure of quality. Again, the novelty of this type of assessment and the lack of an established measure of “User Experience Quality” prevented this type of direct analysis. Instead, study participants were asked whether they believed the results of the rubric reflected the actual quality of the online collection and whether they believed the rubric was clear and easy to understand. This analysis is imperfect, but the results (shown in figure 3) combined with qualitative feedback from the post-study interviews suggest high levels of perceived construct validity among both museum and UX professionals (although there is an obvious need to make the language less museum-centric).

While this presents an opportunity for additional research in this area, particularly in calibrating the reflective dimensions and making the language more accessible to non-museum experts, overall the study results are positive indications that the rubric is a valid instrument.

4.2.3 Utility

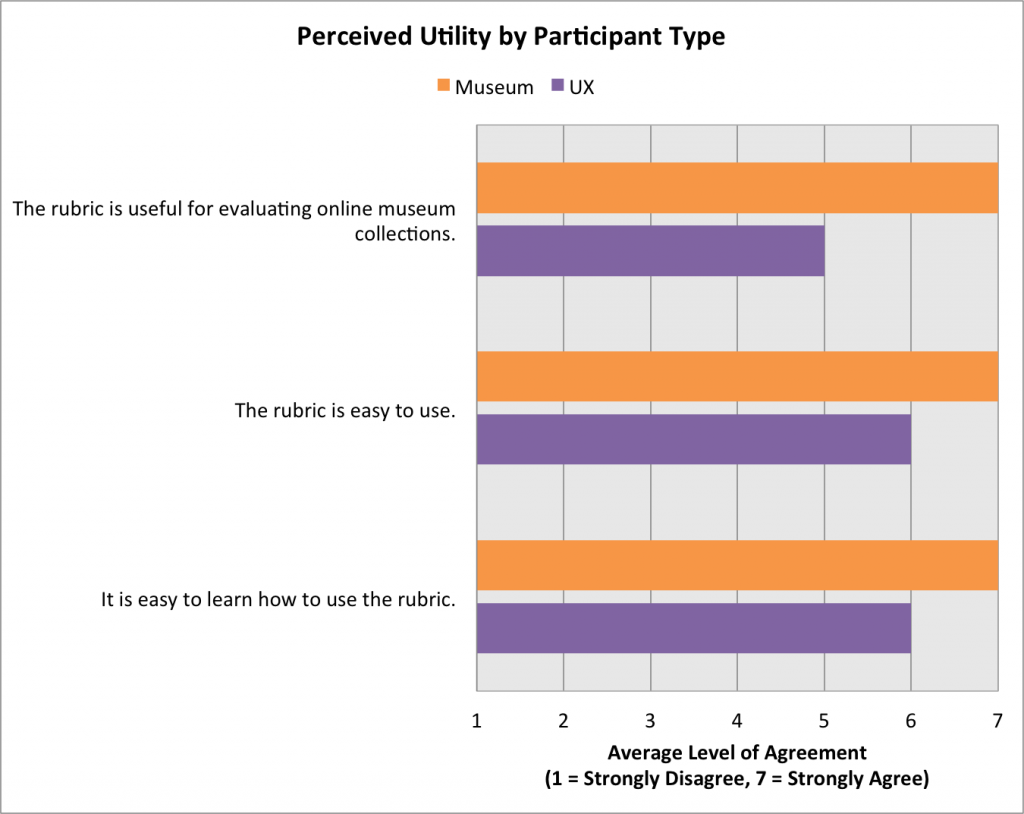

The utility of a rubric is arguably its most complex and most important quality and is typically addressed by measuring its impact on the assessment process. However, measuring the actual impact of a rubric is nearly impossible due to the overwhelming number of confounding factors, so it is recommended to consider the perceived impact instead: that is, the extent to which evaluators consider the rubric to be useful, usable, and learnable (Makri et al., 2011). As shown in figure 4, both groups of study participants agreed that the rubric was useful, easy to use, and easy to learn.

These ratings were confirmed by the post-study interviews, with all four participants affirming the utility of the rubric as an assessment instrument. From a practical perspective, one of the UX participants observed that the rubric seems like a great tool to “help museums figure out how to use their digital budget.” To illustrate this concept, the rubric scores for each museum were averaged across all four participants (to capture the views of both UX and museum experts) and plotted on a radar diagram. This diagram provides a clear visual indicator of the online collection’s strengths and weaknesses compared to the “ideal” collection, which allows museum staff to make more-informed choices when defining their priorities and when setting realistic expectations for improvement. A sample radar diagram for Museum A (located in the United Kingdom) is presented in figure 5.
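The averaging step behind such a radar diagram can be sketched as follows. This is an illustrative outline rather than the procedure actually used to produce figure 5: the function names are hypothetical, the scores are invented placeholders (not study data), and only three of the ten dimensions are shown for brevity. Each dimension becomes one evenly spaced axis, and the averaged score is scaled so that the “ideal” collection (a 4 on every dimension) has radius 1.0.

```python
import math
from statistics import mean


def average_scores(scores_by_rater):
    """Average each dimension's score across all raters."""
    dimensions = list(next(iter(scores_by_rater.values())))
    return {
        d: mean(rater[d] for rater in scores_by_rater.values())
        for d in dimensions
    }


def radar_points(averages, max_score=4):
    """Return (dimension, angle in radians, radius) for each radar axis.

    Axes are evenly spaced around the circle; radius is the average score
    as a fraction of the maximum score.
    """
    n = len(averages)
    return [
        (d, 2 * math.pi * i / n, score / max_score)
        for i, (d, score) in enumerate(averages.items())
    ]


# Hypothetical scores (1-4) from four raters; not taken from the study.
scores = {
    "UX expert 1": {"Visual Aesthetics": 3, "Interface Usability": 2, "Openness": 4},
    "UX expert 2": {"Visual Aesthetics": 4, "Interface Usability": 3, "Openness": 4},
    "Museum expert 1": {"Visual Aesthetics": 3, "Interface Usability": 2, "Openness": 3},
    "Museum expert 2": {"Visual Aesthetics": 4, "Interface Usability": 3, "Openness": 3},
}
averages = average_scores(scores)
points = radar_points(averages)
```

The resulting points can be passed to any plotting tool that supports polar axes; dimensions whose radius falls well short of 1.0 mark the areas where a collection is furthest from the ideal.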

The utility of the rubric can be further enhanced by including more detailed feedback on specific features or aspects of the collection and any other comments or recommendations that arise while the experts complete their assessment. This aspect of utility will be examined in a forthcoming study.

5. Conclusion and future work

With museums intent on making their collections available and accessible via the Web, it is imperative that the resulting interfaces be designed to maximize engagement and provide a positive user experience. To help museums achieve this goal, this paper described the process of developing and evaluating a UX rubric for online museum collections. Developed iteratively following procedures recommended by educators, the rubric consists of ten dimensions split across three aspects of UX (visceral, behavioral, and reflective). The quality of the rubric was then examined through a study involving four experts (two UX professionals and two museum professionals) who were asked to apply the rubric to three online museum collections. While the results of the study suggested that several improvements are necessary to increase the rubric’s accessibility and appeal to non-museum experts, there were also several positive indicators that the rubric is a reliable, valid, and useful assessment instrument. Future work will address the limitations identified by the study participants (e.g., clarifying museum-specific language, examining the reflective dimensions of UX) and more directly examine the practical applicability of the rubric through an applied case study with a museum partner. Nevertheless, this paper presents strong evidence that, in its current form, the UX rubric for online museum collections presented in table 2 can provide valuable guidance for museums interested in improving their users’ experience with their online collection.

Acknowledgments

The author would like to thank Conrad Lochner, whose deep insights about online museum experiences inspired this research and led to the initial set of rubric criteria, and Seth Persons and Samantha Raddatz, whose thoughtful critiques and concrete suggestions were crucial in refining and clarifying the rubric categories.

References

Arter, J., & J. McTighe. (2001). Scoring Rubrics in the Classroom: Using Performance Criteria for Assessing and Improving Student Performance. Thousand Oaks, CA: Corwin Press.

Bargas-Avila, J. A., & K. Hornbæk. (2011). “Old Wine in New Bottles or Novel Challenges? A Critical Analysis of Empirical Studies of User Experience.” In Proceedings of the 2011 ACM Conference on Human Factors in Computing Systems (CHI 2011). New York: ACM Press. 2689–2698.

Fantoni, S. F., & R. Stein. (2012). “Exploring the Relationship between Visitor Motivation and Engagement in Online Museum Audiences.” In J. Trant & D. Bearman (eds.). Museums and the Web 2012: Proceedings. Toronto: Archives & Museum Informatics.

Goodrich, H. (1997). “Understanding rubrics.” Educational Leadership 54(4), 14–17.

Gorgels, P. (2013). “Rijksstudio: Make Your Own Masterpiece!” In N. Proctor & R. Cherry (eds.). Museums and the Web 2013: Proceedings. Silver Spring: Museums and the Web, 2013. Available http://mw2013.museumsandtheweb.com/paper/rijksstudio-make-your-own-masterpiece/

Hafner, J. C., & P. M. Hafner. (2003). “Quantitative Analysis of the Rubric as an Assessment Tool: An Empirical Study of Student Peer-Group Rating.” International Journal of Science Education 25(12), 1509–1528.

Hassenzahl, M., & N. Tractinsky. (2006). “User experience – a research agenda.” Behaviour & Information Technology 25(2), 91–97.

Haynes, J., & D. Zambonini. (2007). “Why Are They Doing That!? How Users Interact With Museum Web sites.” In J. Trant & D. Bearman (eds.). Museums and the Web 2007: Proceedings. Toronto: Archives & Museum Informatics. Available http://www.archimuse.com/mw2007/papers/haynes/haynes.html

Huba, M. E., & J. E. Freed. (2000). Learner-Centered Assessment on College Campuses: Shifting the Focus From Teaching to Learning. Needham Heights, MA, USA: Allyn & Bacon.

Jones, K. B. (2007). “The transformation of the digital museum.” In P. F. Marty and K. B. Jones (eds.). Museum informatics: People, information, and technology in museums. New York: Routledge, 9–25.

Jonsson, A., & G. Svingby. (2007). “The use of scoring rubrics: Reliability, validity and educational consequences.” Educational Research Review 2(2), 130–144.

Law, E. L., V. Roto, M. Hassenzahl, A. Vermeeren, & J. Kort. (2009). “Understanding, scoping and defining user experience: a survey approach.” In Proceedings of the 2009 ACM Conference on Human Factors in Computing Systems (CHI 2009). New York: ACM Press. 719–728.

Lin, C. H., W. Fernandez, & S. Gregor. (2012). “Understanding web enjoyment experiences and informal learning: A study in a museum context.” Decision Support Systems 53(4), 846–858.

MacDonald, C. M., & M. E. Atwood. (2013). “Changing perspectives on evaluation in HCI: Past, present, and future.” In 2013 Extended Abstracts on Human Factors in Computing Systems (CHI 2013). New York: ACM Press. 1969–1978.

Makri, S., A. Blandford, A. L. Cox, S. Attfield, & C. Warwick. (2011). “Evaluating the Information Behaviour methods: Formative evaluations of two methods for assessing the functionality and usability of electronic information resources.” International Journal of Human-Computer Studies 69(7-8), 455–482.

Norman, D. (2004). Emotional Design: Why We Love (or Hate) Everyday Things. Cambridge, MA: Basic Books.

O’Reilly, L., & T. Cyr. (2006). Creating a Rubric: An Online Tutorial for Faculty. University of Colorado, Denver. Available http://www.ucdenver.edu/faculty_staff/faculty/center-for-faculty-development/Documents/Tutorials/Rubrics/index.htm

Quinlan, A. M. (2012). A Complete Guide to Rubrics: Assessment Made Easy for Teachers of K-College, second edition. Lanham, MD: Rowman & Littlefield Education.

Rayward, W. B., & M. B. Twidale. (1999). “From Docent to Cyberdocent: Education and Guidance in the Virtual Museum.” Archives and Museum Informatics 13(1), 23–53.

Roblyer, M. D., & W. R. Wiencke. (2003). “Design and Use of a Rubric to Assess and Encourage Interactive Qualities in Distance Courses.” The American Journal of Distance Education 17(2), 77–98.

Stellmack, M. A., Y. L. Konheim-Kalkstein, J. E. Manor, A. R. Massey, & J. A. P. Schmitz. (2009). “An Assessment of Reliability and Validity of a Rubric for Grading APA-Style Introductions.” Teaching of Psychology 36(2), 102–107.

Stevens, D. D., & A. J. Levi. (2013). Introduction to Rubrics: An Assessment Tool to Save Grading Time, Convey Effective Feedback, and Promote Student Learning, second edition. Sterling, VA: Stylus Publishing.

Turley, E. D., & C. W. Gallagher. (2008). “On the ‘Uses’ of Rubrics: Reframing the Great Rubric Debate.” The English Journal 97(4), 87–92.

van Dijk, E., A. Lingnau, & H. Kockelkorn. (2012). “Measuring enjoyment of an interactive museum experience.” In Proceedings of the 14th ACM International Conference on Multimodal Interaction (ICMI ’12). New York: ACM Press. 249–256.

Cite as:

MacDonald, C. "Assessing the user experience (UX) of online museum collections: Perspectives from design and museum professionals." MW2015: Museums and the Web 2015. Published February 1, 2015.

https://mw2015.museumsandtheweb.com/paper/assessing-the-user-experience-ux-of-online-museum-collections-perspectives-from-design-and-museum-professionals/